Grok 4.3 on AI Gateway

Grok 4.3 is now available on Vercel AI Gateway. The model has a December 2025 knowledge cutoff and a 1M-token context window, and it brings improvements in accuracy, tool calling, and instruction following.

To use Grok 4.3, set model to xai/grok-4.3 in the AI SDK.

import { streamText } from 'ai';

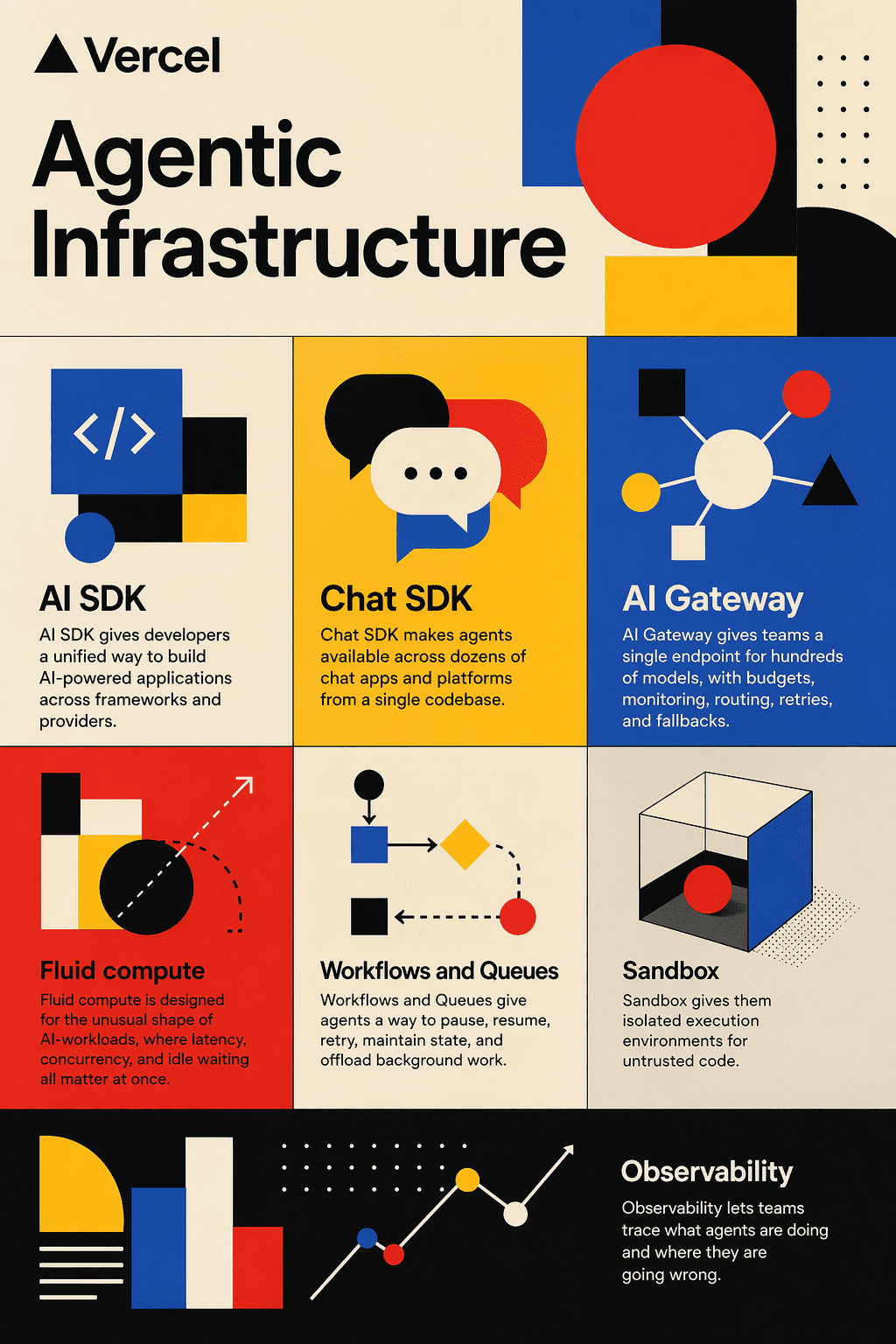

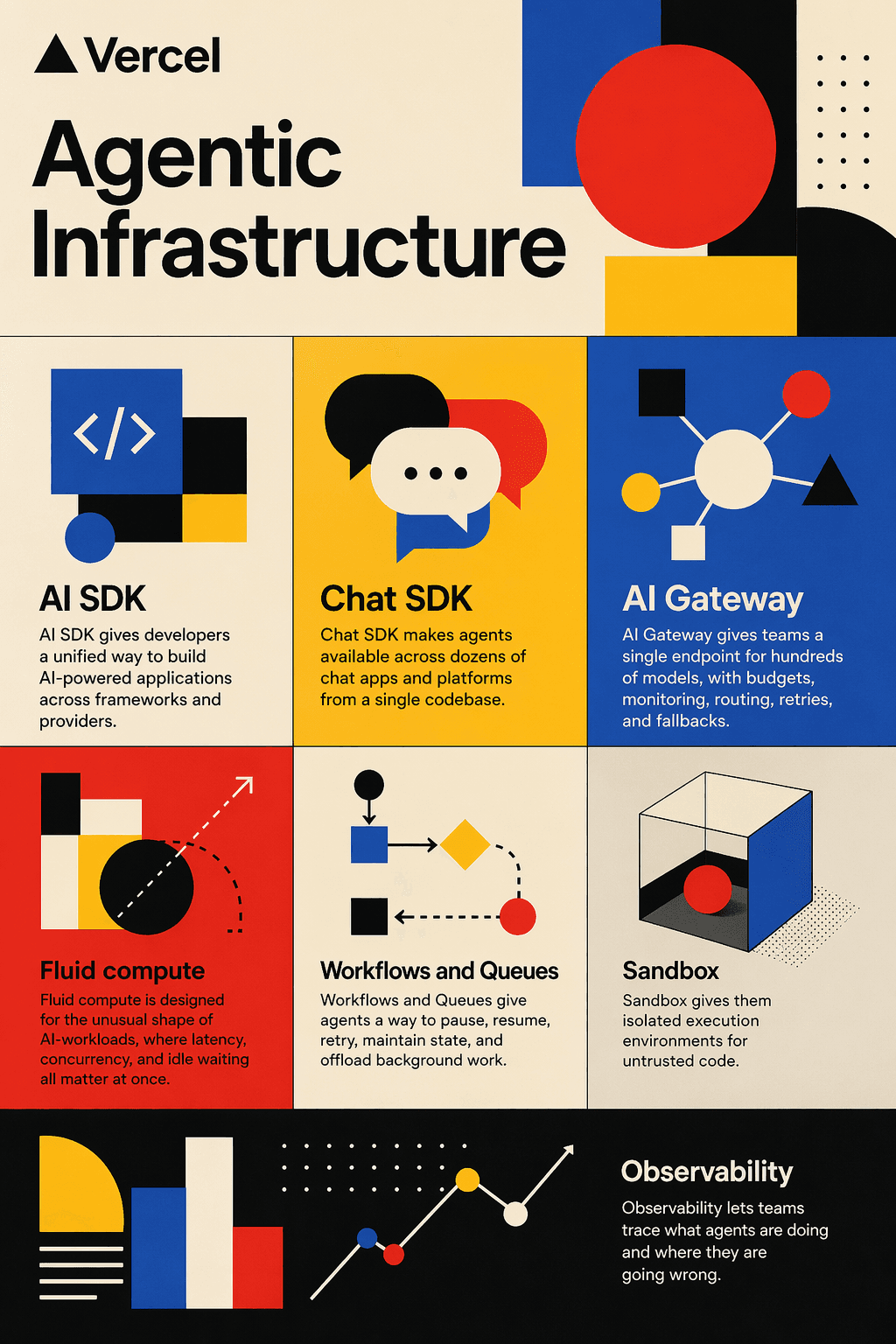

const result = streamText({ model: 'xai/grok-4.3', prompt: 'Analyze this dataset and summarize the key trends.',});AI Gateway provides a unified API for calling models, tracking usage and cost, and configuring retries, failover, and performance optimizations for higher-than-provider uptime. It includes built-in custom reporting, observability, Bring Your Own Key support, and intelligent provider routing with automatic retries.
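The streamText call shown earlier returns its output incrementally, so the text still has to be read from the stream. Below is a minimal sketch of one way to do that, assuming the result exposes its text as an async iterable of string chunks (result.textStream in the AI SDK). The names collectText and fakeTextStream are hypothetical helpers introduced here for illustration; they are not part of the AI SDK or AI Gateway.

```typescript
// Hypothetical helper (not part of the AI SDK): drains a stream of text
// chunks, passes each chunk to an optional callback, and returns the
// concatenated text once the stream ends.
async function collectText(
  chunks: AsyncIterable<string>,
  onChunk: (chunk: string) => void = () => {},
): Promise<string> {
  let fullText = '';
  for await (const chunk of chunks) {
    onChunk(chunk);
    fullText += chunk;
  }
  return fullText;
}

// Stand-in for a model's text stream so the sketch runs without network
// access or credentials. The chunk contents are placeholder text.
async function* fakeTextStream(): AsyncGenerator<string> {
  yield 'Hello, ';
  yield 'world';
}
```

With a real result, the same helper could be called as collectText(result.textStream, (chunk) => process.stdout.write(chunk)) to print chunks as they arrive while still keeping the full response. Passing the stream in as a plain AsyncIterable keeps the helper independent of any one model or provider.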

Learn more about AI Gateway, view the AI Gateway model leaderboard, or try it in our model playground.